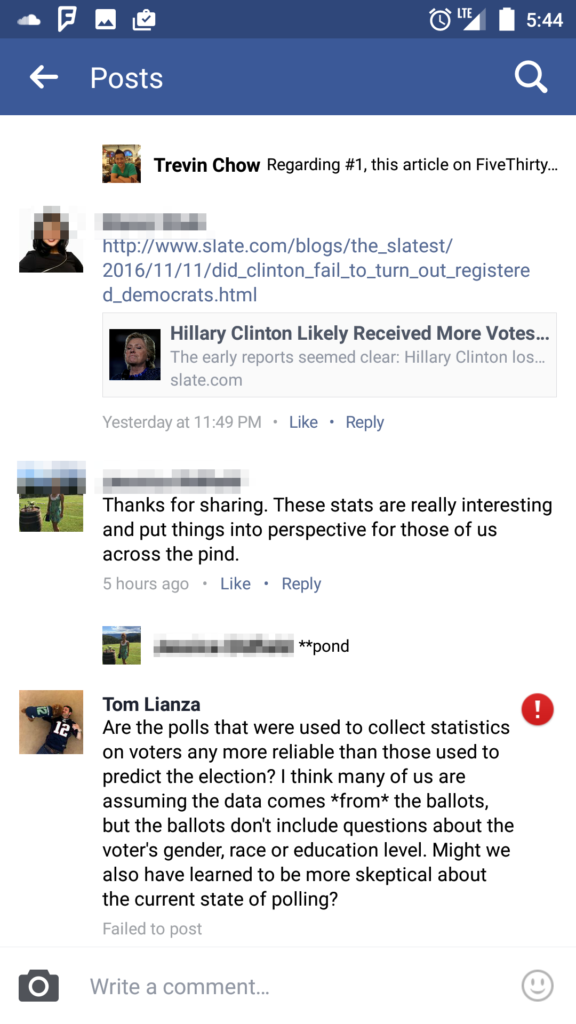

This morning I read an interesting post from my friend Trevin about the election and wrote a comment in response. His post was thoughtful and not at all combative, in part expressing surprise at the low voter turnout and the makeup of voters, citing:

Clinton won 65% of Latinos and only 54% of women. Even more surprising, Clinton only won 51% of “White women college graduates”.

The comment I wrote in response is pictured below… but you’ll notice the red exclamation point next to it:

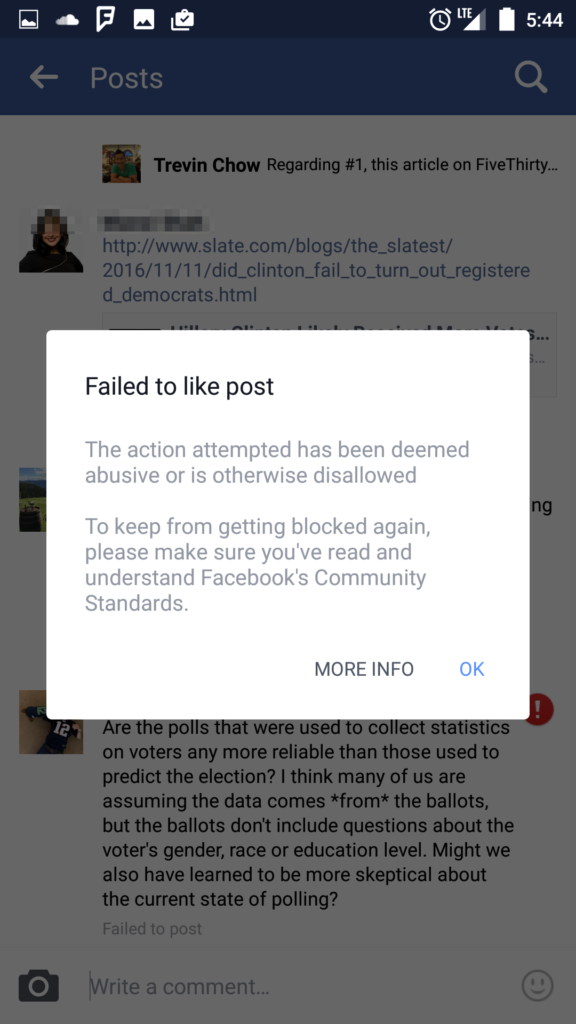

If you read the comment, I think it’s pretty clear that it’s civil… you might even go so far as to say that it’s thoughtful and furthers the discussion! But… you won’t see that comment on Facebook.

It was “deemed abusive or otherwise disallowed” by Facebook’s algorithms (it was too fast to be done by anything but a machine).

It reminded me of two things:

- Mark Zuckerberg calling it a “crazy idea” that the spread of fake news on Facebook influenced the election. Obviously this post isn’t about fake news, but it’s a conversation about how trustworthy polling is, and Facebook blocked that conversation from occurring.

- My time in China, where it was well known that WeChat had certain keywords that would get you flagged or banned.

Of course, this could be just a bug. But, it was a good reminder to me that Facebook is not mine. Your Facebook wall is not a blog under your control. Your Facebook messages are not private communications between you and the recipient. Your speech and behavior within their walled garden are subject to their terms and conditions.

I have basically accepted the echo chamber that comes from seeing things shared by people you already associate and identify with. But I’ve been increasingly concerned about digital redlining, and only recently have I considered the fact that I’m not even seeing the “real” echo chamber, but some subtly modified version of it.

Wow, Tom, thanks for posting! I saw someone else on Facebook had a comment blocked from a liberal perspective; it’s never happened to me.

Do they give you any further explanation of the blocked comment?

I didn’t write them… I just googled the error and saw posts from others who’ve reported the same thing in forums and been unsure as to why. Facebook has a help page which is pretty high-level: https://www.facebook.com/help/216782648341460

Honestly I think it’s just a bug… it’s not in their interest to discourage sharing and participation. But, there’s no mistaking the fact that they can silence whatever they want (intentionally or unintentionally).

Tom: Very interesting. In this election year I’ve read articles about Google search results vs. Yahoo’s being biased for one candidate or the other.

I never even knew Facebook could block you. Apparently George Orwell was right. 🙂

John O